Scaling compliant learning content across thousands of apprentices

Multiverse is the UK's leading apprenticeship provider, a tech unicorn valued at over $1 billion, with 20,000 learners on programmes in AI, data, and software engineering. Every programme must meet strict UK apprenticeship standards. Content has to be accurate, compliant, and consistent at scale. As Product Designer for Learning Architecture, I designed a suite of tools to fix a production process that couldn't keep up with the business.

A single learning unit took roughly 40 hours to produce. 73% of that time, around 29 hours, sat in two phases: writing and review.

The workflow was fragmented:

Pathway management was in the same shape:

Employers wanted tailored courses. The tooling made that nearly impossible.

The constraint wasn’t the people. It was the infrastructure they were working inside.

Rather than fixing individual steps, I redesigned the system around three principles:

The goal wasn’t just efficiency. It was to make content production scalable.

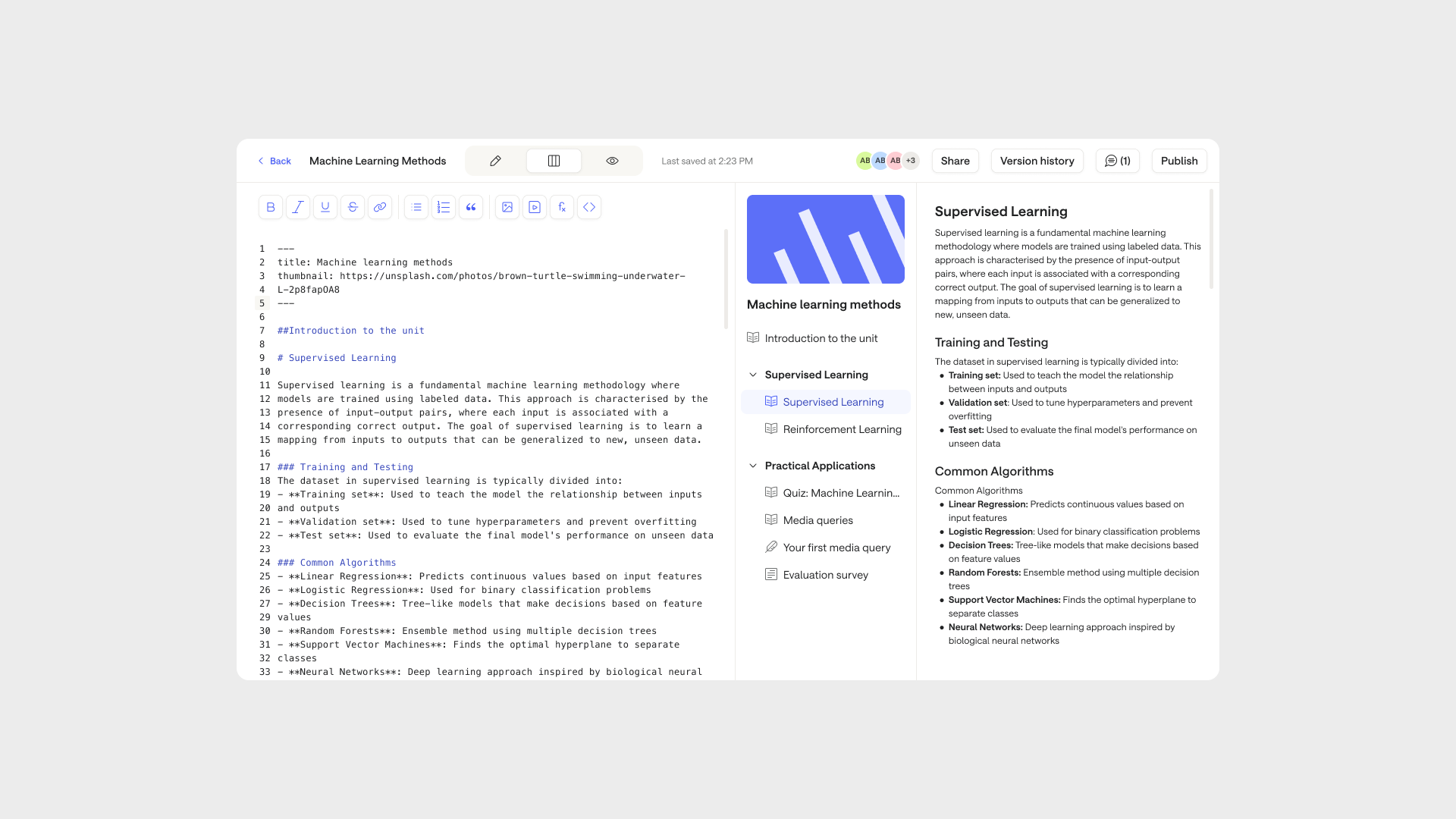

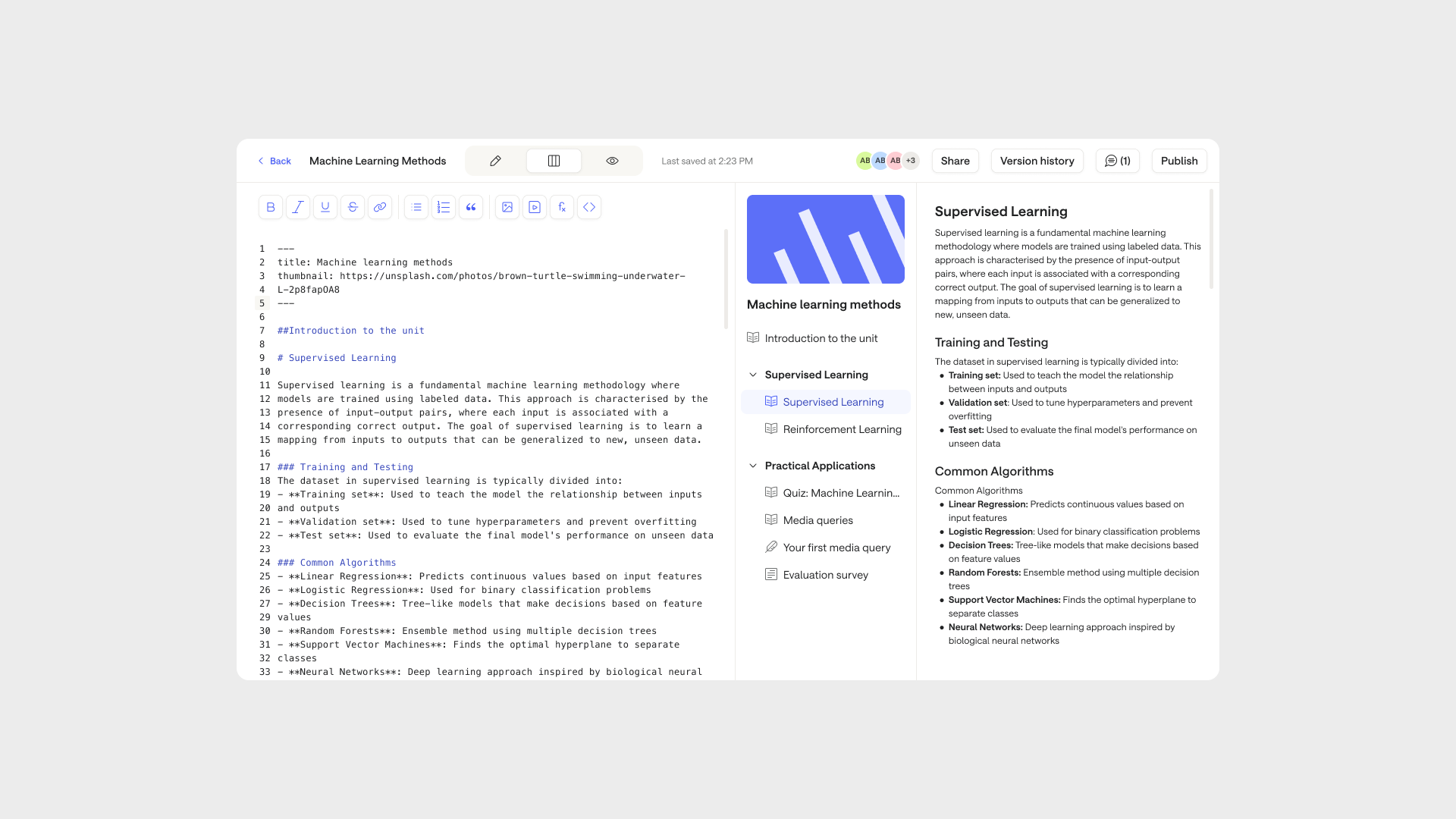

A 0-to-1 product: a collaborative editor for creating and refining learning content, replacing a fragmented process of Google Docs, manual copy-paste, and spreadsheet QA.

Designers can generate an AI draft directly in the editor, based on the competencies and learning objectives they select. From there, they work through the content section by section, reviewing and refining as they go:

No copying. No reformatting. No parallel tracking.

Alongside the design work, I helped shape the release plan and go-to-market strategy, working with product and leadership to define how we’d phase the rollout, manage the transition from the old workflow, and bring learning designers along with the change.

Multiverse has a large and constantly evolving content catalogue. Units need to stay accurate, compliant, and up to date as standards change and programmes expand.

Compliance checks were manual and happened late. Problems were often caught after significant work had already been done.

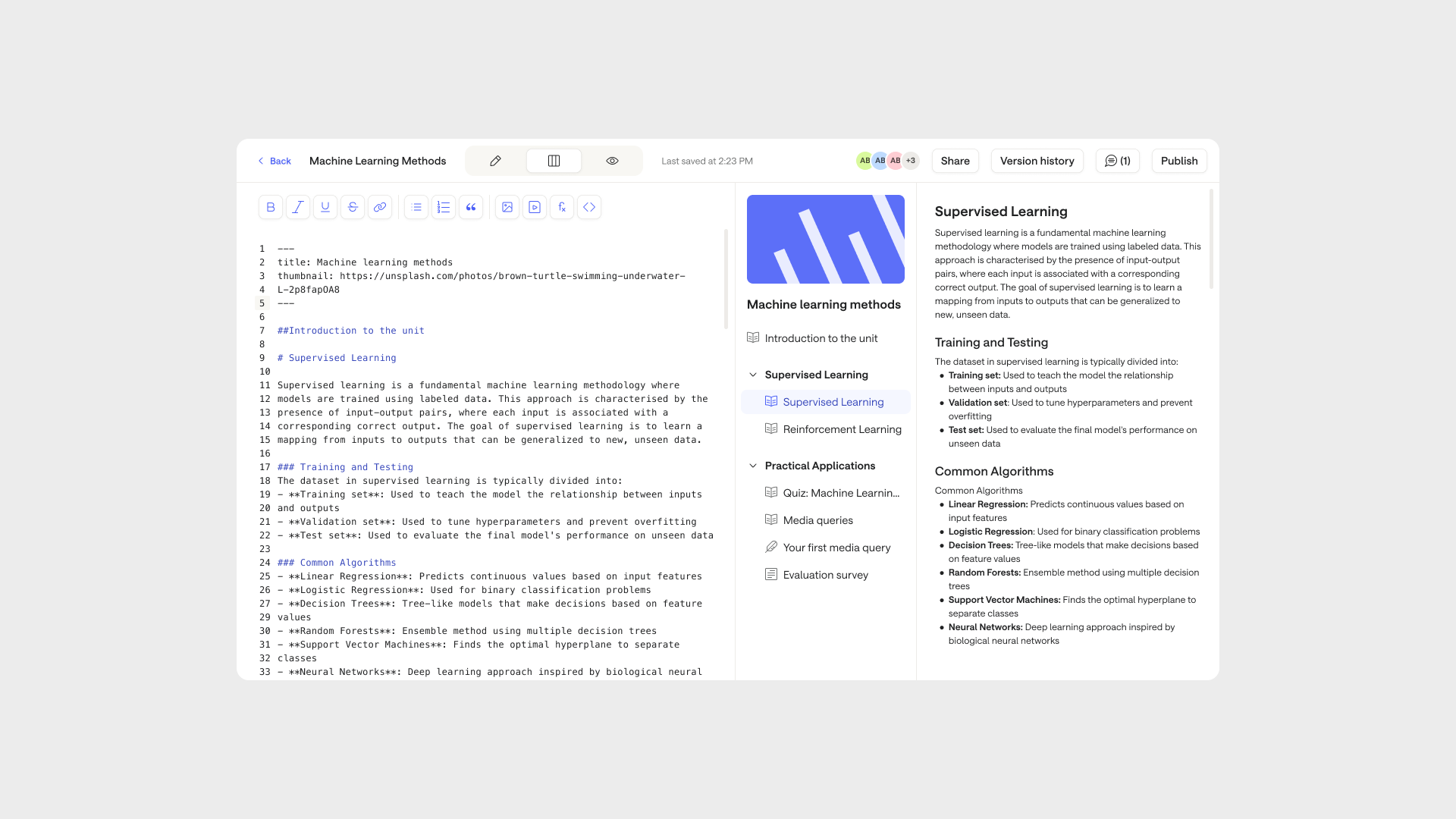

I designed an automated review layer that catches structural gaps, accessibility issues, and compliance misalignments before they reach a reviewer, so human time goes on judgement, not repetition.

Alongside this, I designed a content health dashboard that gave teams a holistic view of the catalogue: what was in good shape, what needed attention, and where quality was slipping. Rather than finding out a unit had problems when a learner hit it, teams could get ahead of it.

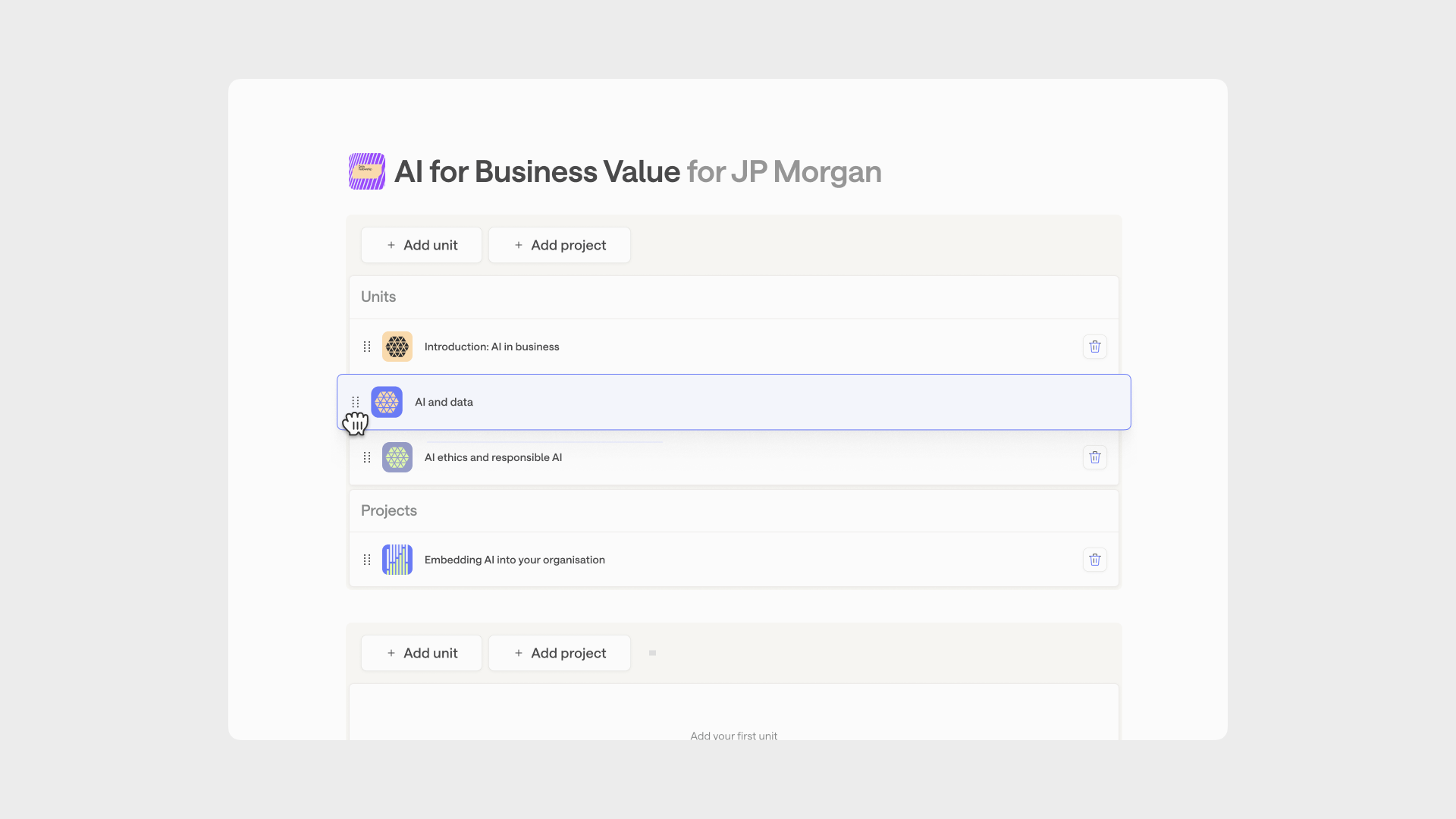

Building or changing a programme required engineering support. There was no existing UI for it. This made customisation slow and inflexible.

I set out to design a simple pathway-creation interface from scratch.

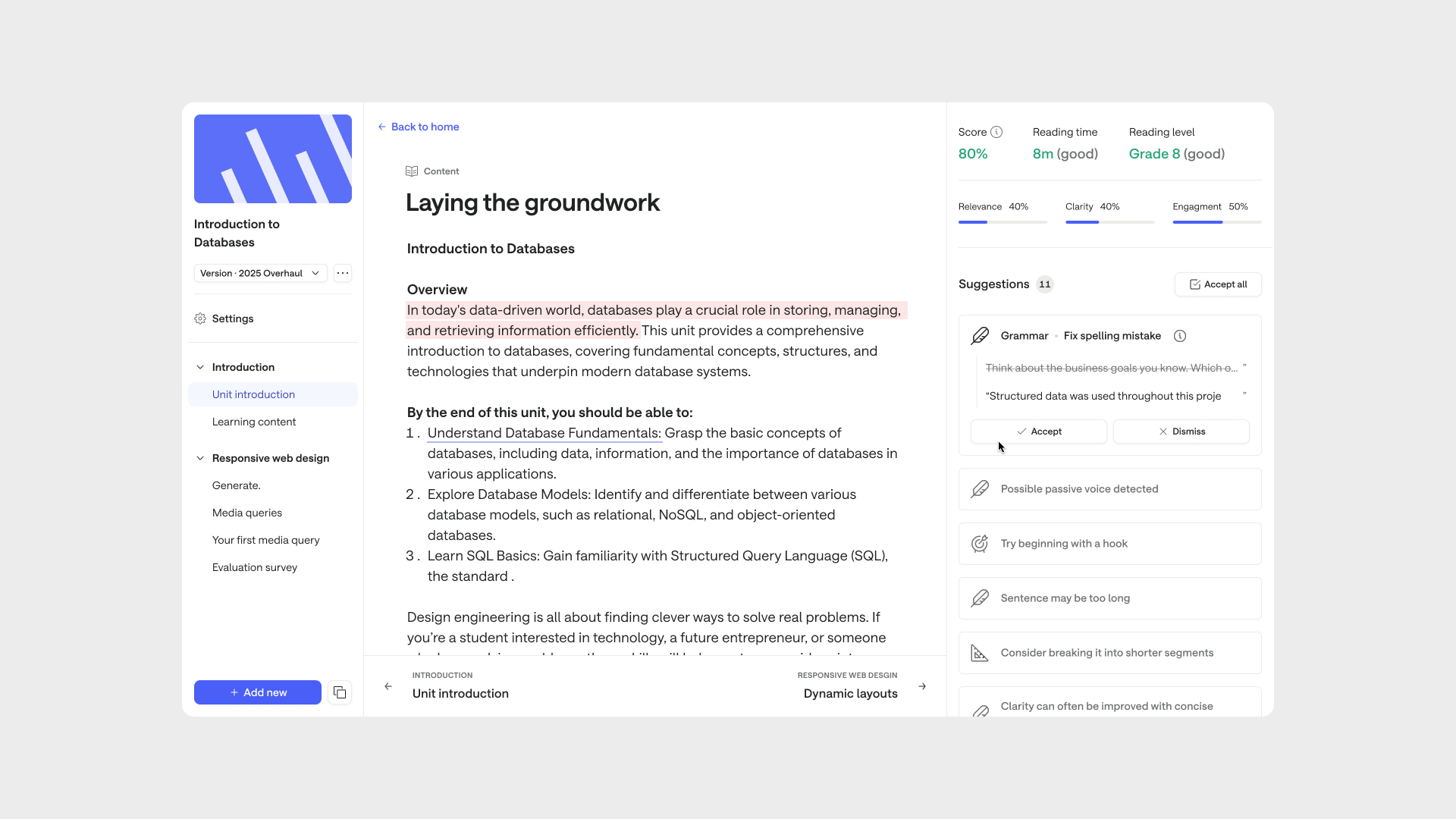

I designed a self-serve pathway editor that lets teams configure programmes directly, tracks compliance coverage in real time, and warns when changes introduce gaps.
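To make the gap warning concrete, here is a minimal sketch of how coverage could be checked when a pathway changes. It is an illustration under stated assumptions, not the production logic: the `Unit` model, the function names, and the competency codes (`K1`, `K2`, `S1`) are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    """A learning unit and the competencies it covers (hypothetical model)."""
    name: str
    competencies: frozenset[str]


def coverage_gaps(required: set[str], units: list[Unit]) -> set[str]:
    """Return the required competencies that no unit in the pathway covers."""
    covered: set[str] = set()
    for unit in units:
        covered |= unit.competencies
    return required - covered


def gaps_introduced(required: set[str], before: list[Unit], after: list[Unit]) -> set[str]:
    """Return gaps that exist after an edit but did not exist before it."""
    return coverage_gaps(required, after) - coverage_gaps(required, before)


# Hypothetical standard with three required competencies.
required = {"K1", "K2", "S1"}
pathway = [
    Unit("Data foundations", frozenset({"K1", "K2"})),
    Unit("Applied analysis", frozenset({"S1"})),
]

# Simulate an edit that removes the second unit from the pathway.
edited = [pathway[0]]
new_gaps = gaps_introduced(required, pathway, edited)
if new_gaps:
    print(f"Warning: this change leaves {sorted(new_gaps)} uncovered")
```

Comparing coverage before and after the edit means the warning only fires for gaps the change itself caused, rather than repeating gaps that were already known.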

One of the key design decisions was supporting customisation at three levels: company, cohort, and individual learner. A company could set the overall programme structure. A cohort could have modules adjusted for their industry or context. An individual learner could have specific content swapped or supplemented based on their prior experience. Each level inherited from the one above, so changes could be made without rebuilding from scratch.
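The inheritance between levels can be pictured as layered overrides. The sketch below is one possible way to model it, assuming each level only stores what it changes; the module keys, module titles, and the `resolve_pathway` helper are hypothetical and not taken from the product.

```python
from collections import ChainMap

# Company level: the full programme structure (hypothetical modules).
company = {
    "module_1": "Data foundations",
    "module_2": "Statistics",
    "module_3": "Visualisation",
}

# Cohort level: only the modules adjusted for this cohort's industry.
cohort = {"module_2": "Statistics for retail"}

# Learner level: only the content swapped for this individual.
learner = {"module_3": "Advanced visualisation"}


def resolve_pathway(company, cohort=None, learner=None):
    """Merge the three levels so the most specific override wins.

    ChainMap looks keys up from left to right, so a learner override
    beats a cohort override, which beats the company default.
    """
    return dict(ChainMap(learner or {}, cohort or {}, company))


resolved = resolve_pathway(company, cohort, learner)
cohort_only = resolve_pathway(company, cohort)
```

Because each level holds only its differences, a change to the company structure flows down to every cohort and learner that has not overridden that module, and nothing needs to be rebuilt.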

Flexibility, with guardrails built in.

Getting this built required more than a brief. I built a working prototype and presented real scenarios to leadership. It became a priority immediately.

60% reduction in content authoring time per module. 40% faster quality assurance review cycles.

The bigger shift was capacity:

Moving content production onto Multiverse systems also changed the data model. Every edit, review, and QA check now generates data the business owns, creating the foundation for improving AI systems over time.

From collaborators

"A fearless designer who jumps into the unknown and gets the work done. Grab him if he's available — your team will thank you."

"Turns research insights into clear design decisions. Strong strategic thinking with close attention to detail."